I’ve been wanting to talk about this topic for a while. Legitimacy in AI development is widely discussed: there’s rich scholarship on authority, standing, and community voice, from participatory design to Indigenous data sovereignty. What I don’t see getting adequate attention in mainstream AI ethics conversations is the fundamental distinction between legitimacy that developers claim for themselves and legitimacy that communities confer on them. It’s the difference between ‘we are legitimate because we followed the principles’ and ‘they have given us standing to proceed.’

I want to clarify that this is not an article on different forms of legitimacy. It’s a discussion-starter asking “who gets to decide” what legitimacy is in the context of ethical AI. Nor does it propose frameworks or solutions: the great challenge of making the right voices heard is for another day. Finally, it sits within the specific context of large, socially-impactful systems such as the ones I mention below.

I hope you find it interesting and you find parts that resonate or provoke thoughts that you can share with me.

Legitimacy and trust

Though interrelated, trust and legitimacy are not the same thing. A key differentiator that I want to focus on here is the locus of control:

Trust: provisional acceptance or belief in the competence and good faith of another, is a sentiment held by a person or group (we have trust in you/I am trusted by them).

Legitimacy: authority/standing or acceptance, often based on shared ethical, procedural and sociocultural principles, is a property conferred by one party to another (I grant you/your project legitimacy).

It is possible, common in fact, for a population to have trust in an organisation without accepting its legitimacy in certain contexts. For example, in “I trust my government but I don’t want it making decisions about my healthcare”, the government is a trusted institution but is not considered to have the authority or insight to make personal healthcare decisions. Both trust and legitimacy are required for organisations to establish and maintain a social license to operate.

Why we need legitimacy and how to “get” it

Tech companies frequently invest in “legitimacy seeking mechanisms” to encourage acceptance and engagement from customers and other stakeholders and, in the case of startups, to “overcome their liability of newness” (Bunduchi et al. 2023). These might include seeking moral legitimacy with detailed “origin stories” (a wellness app founder’s personal history of overcoming ill health, for example), or contributing to common cultural norms (sustainable practices or contributing a percentage of profits to charity).

These efforts can appear hollow and manipulative if not executed with sufficient integrity. They can also reportedly yield dividends when companies successfully associate themselves with values like privacy, safety, or social responsibility. Irrespective of the effectiveness of legitimacy seeking mechanisms, their proliferation reveals a growing cognizance of the importance of legitimacy to success and, underlying this, a tacit recognition that legitimacy can’t be derived internally: it must be externally conferred.

Epistemic justice – it’s about the “who”

When it comes to legitimacy, there is the important question of “who”. Who is involved in the development of AI guidelines and systems, and with what authority, has profound implications for the epistemological foundations of the project:

“The, often localized and highly situational, background information required to provide important interpretative context can be difficult to identify without access to the lived experiences of data subjects. This is why the powerful phrase “nothing about us without us”, coined by the disability rights movement, is not just an ethical principle but an epistemic necessity” (Anderson and Marshall 2026)

But not all communities are starting from the same place. Indigenous AI and data sovereignty groups around the world have led the way for years in defining guidelines for projects that use Indigenous data or affect Indigenous communities. For obvious reasons, Indigenous communities have had the dubious advantage of arriving early at the conclusion that the dominant authorities do not necessarily share an understanding of the important historical facts, moral codes, social norms, cultural knowledge and lived experiences that inform their lives and make a material difference to the way in which new systems will be used by and affect them.

Artefacts such as the Indigenous data sovereignty principles, collaboratively developed by Indigenous communities across the world, and associated practice guides are the mechanism by which developers can achieve alignment, laying the groundwork for a conferred legitimacy and the necessary standing to pursue the project. They do this in two important ways:

- By establishing the Indigenous communities’ authority in relation to the data that represents them and systems that affect them; and

- By defining the standards of practice and protocols for developers, governments and organisations, which can help to confer legitimacy (albeit provisional) on a project.

The claim to authority is important. It speaks to legitimacy from the under-discussed perspective of “standing” and “ground truth”. It refers to aspects of moral and cultural legitimacy, but also speaks to the primacy and uniqueness of impacted communities in making decisions based on moral and epistemological ground truths. This is key to any interpretation of ethical AI practice. It articulates a deep-rooted understanding we all share, going back to our earliest days of social interaction, that gives rise to that sense of indignation and disempowerment when we have been transgressed against.

The legitimacy language gap and epistemic injustice

It surprises me how little mention there is of “legitimacy” in the dominant AI legislation and ethics frameworks. AI legislative and compliance frameworks worldwide seem to focus extensively on procedural compliance (transparency, accountability, fairness, risk assessment) but remain largely silent on questions of legitimacy, and in particular on who has the authority to confer standing to develop and operate AI systems in our communities. This is potentially because the language of conferred legitimacy is simply not there. Instead we fall back on requirements for “stakeholder engagement”, “participatory design”, “transparency”, “explainability” and so on. For example, the NIST Artificial Intelligence Risk Management Framework [AI RMF 1.0] has this to say about trustworthiness (my emphasis):

“For AI systems to be trustworthy, they often need to be responsive to a multiplicity of criteria that are of value to interested parties. Approaches which enhance AI trustworthiness can reduce negative AI risks. […] Characteristics of trustworthy AI systems include: valid and reliable, safe, secure and resilient, accountable and transparent, explainable and interpretable, privacy-enhanced, and fair with harmful bias managed. Creating trustworthy AI requires balancing each of these characteristics based on the AI system’s context of use. While all characteristics are socio-technical system attributes, accountability and transparency also relate to the processes and activities internal to an AI system and its external setting.” (Tabassi 2023)

This is good guidance. It strongly ties trustworthiness to stakeholder values and context of use, but there is no guidance on who gets to identify the interested parties, how their preferences are prioritised, or who says what is “fair” or “harmful”.

A recent systematic review of AI governance and ethics literature found 17 prevalent principles across global AI governance frameworks (Corrêa et al. 2023). Legitimacy or standing as a qualifying principle was not among them. The absence of this language reflects a fundamental gap that may explain the wide variability in the outcomes of AI projects despite the plethora of available guidelines and legislation.

Without formal consideration of legitimacy, there is no requirement to properly assess whether developers have access to the epistemological reference points needed to establish metrics of fairness and bias, make detailed decisions about the selection and treatment of data, and properly assess the project’s risks, potential impacts and worthiness.

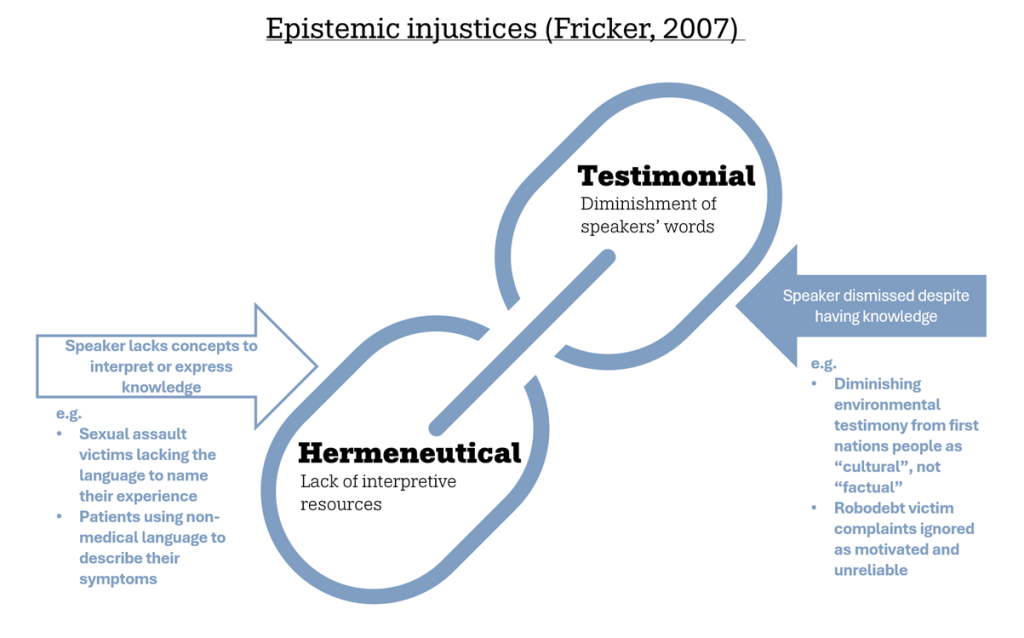

Two key forms of epistemic injustice highlighted by Miranda Fricker (Fricker 2007) are hermeneutical injustice, affecting groups with a lack of interpretive resources, and testimonial injustice, whereby prejudice causes the “hearer” to diminish the words of the speaker. Researchers are increasingly applying Fricker’s framework to AI, exploring the ways in which systems perpetuate epistemic injustices (Kay et al. 2024; Barry and Stephenson 2025) and how algorithmic opacity facilitates both testimonial and hermeneutical harms (Milano and Prunkl 2025).

Figure 1 A depiction of the complementary harms of Fricker’s two forms of epistemic injustice

Prejudice (pre-judgement) means forming an opinion ahead of actual experience or knowledge. No malice of intent is required for prejudice to define which voices and epistemic ground-truths are of value, but the effects on affected communities are profound. These two forms of epistemic injustice are the one-two punch that repeatedly hits the same marginalised communities: systems are produced that they are less equipped to navigate beneficially, and their insights and experiences are discounted, if not entirely unheard, throughout the AI system lifecycle.

An example in Australia’s recent past is the Federal Government’s automated welfare debt recovery system, nicknamed “Robodebt”, which falsely accused thousands of welfare recipients of owing debts for overpayments. Hermeneutical injustice was inflicted by putting the onus on already marginalised and vulnerable welfare recipients to deconstruct technocratic debt recovery notices and mount their own defence. Testimonial injustice compounded it: for years the algorithm and its sponsors were given primacy over the voices of affected and concerned citizens, which were downplayed.

Widespread access to generative AI systems threatens to make the landscape of injustice (permeated by the dominant western cultural norms of its designers) diffuse, hard to police, and near-universal. Kay and colleagues give an illustrative example in the erasure of cultural differences in the facial expressions of photographs generated by the Midjourney system, in which faces from all around the world are rendered with the same “American smile” (Kay, Kasirzadeh, and Mohamed 2024). As they state, the stakes are increasingly high as generative AI systems become the dominant epistemic tool by which we learn about the world around us.

Towards community-conferred legitimacy

Indigenous communities have long known the power of that one-two punch and, as discussed, have led the way with Indigenous data and AI sovereignty frameworks (Hudson et al. 2023; Kukutai 2023; Kukutai and Taylor 2016) that establish community authority over the data and systems affecting them, and provide the means to confer legitimacy on specific projects within stated bounds.

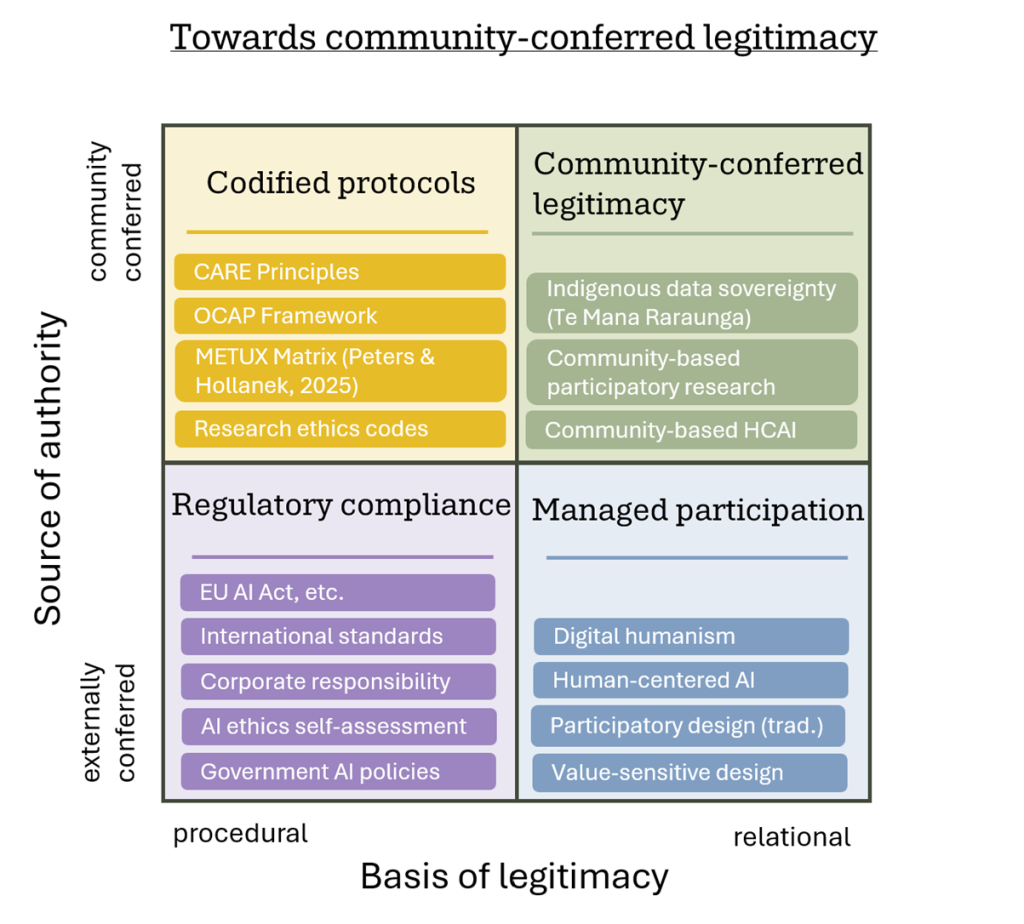

Adding to the discourse are a growing body of more inclusive approaches to AI research & development such as community based participatory design (CBPD), Digital Humanism and Human-centered AI (HCAI). These “Managed participation” approaches (Figure 2 below) prescribe a more in-depth relationship with affected communities and, though not explicitly stated, reach towards establishing a conferred legitimacy on relational grounds by looking for congruence and alignment on key measures. These approaches are an important bolster to the established body of regulatory and compliance artefacts (“Regulatory compliance”). They help developers to engage with affected communities, but, in the most part, developers retain the authority to define the parameters and measures of success (like what counts as “ethical”, “fair” or “human-centered”; who gets to participate and how).

Legitimacy in this case is claimed on the basis of following the procedures but we all know that “the map is not the territory”(Korzybski and Strate 2023). Korzybski was the first to warn about conflating inferential and descriptive terms, that is, treating inferences as if they were observations. This precisely describes the error we make when claiming that following the steps in a methodology infers legitimacy. Like looking at a map and mistaking it for the territory, we examine the procedures and principles (the ‘what’ and ‘how’) while missing the authority relations (the ‘who’). We’re perfecting the cartography while ignoring that we may not have permission to traverse this land at all.

Legitimacy is conferred, a community can judge us to be legitimate based on their relationship with and knowledge of us, we can’t make that inference from our observations alone. Thus community-conferred legitimacy is a form of relational legitimacy afforded by the quality of the working relationship established with affected communities, the extent to which epistemic differences are understood and injustices are overcome and the recognition that this legitimacy is revokable.

Figure 2 below is an illustrative sample of AI guidelines, mapped across two dimensions: how legitimacy is established (procedural vs. relational) and who confers it (external authorities vs. affected communities).

Figure 2 Sample of ethical AI guidelines along two axes: basis of legitimacy and source of authority, community-conferred legitimacy exists where authority is granted by affected communities through relational accountability (Oguanaman 2019; Peters and Hollanek 2025; Russo and Hudson 2022).

While researchers have been actively exploring epistemic injustice in AI since at least 2022, and emerging participatory frameworks are beginning to operationalize community authority, these insights have not meaningfully penetrated mainstream AI regulation or industry practice. The EU AI Act, NIST AI RMF, and dominant government and corporate responsible AI frameworks continue to treat legitimacy as something developers claim through procedural compliance, not something communities confer through relational accountability. “Codified protocols” have emerged over time which come closer to bridging this gap, but the question remains: why have these insights failed, so far, to filter through to dominant guidelines and standards? A few possibilities spring to mind:

- The research is received by the development community as yet another demand to consult more with the community, with little consideration of how this will affect timelines and delivery costs; and/or

- Related to the above, the bodies who develop these guidelines are influenced by political and industry pressures to keep guidelines procedural and largely self-policing; and/or

- The language of the professionals that dominate the AI policy landscape does not incorporate, and therefore does not value or know what to do with, the terms defining epistemic injustice, relational accountability and legitimacy in its various forms (an example of testimonial and hermeneutical injustices in one).

To address the first two possibilities, it is important to clarify that the goal of this and related discussions is not necessarily to increase the volume of consultation but to improve its quality and, in fact, the dynamics of the consultations in terms of their scope, richness and power. Rather than increasing the workload with more consultation, community-conferred legitimacy asks different questions: do we have the relational foundation and community authorization to be doing this work at all, and does our team bring the right epistemic ground truths to the work?

In her book “The Dynamics of Epistemic Injustice”, Amandine Catala introduces her discourse by positioning it in the frame of standpoint theory and its three main claims: that the socially situated nature of knowledge affects what each of us knows about the world; that non-dominant social groups have “epistemic privilege”, meaning they will have access to knowledge of their less privileged world that more privileged groups will not; and that this “epistemic privilege” is not automatic by virtue of membership of a specific group:

“epistemic privilege is a collectively mediated and critically generated achievement.” (Catala 2025)

This concisely encapsulates much of the discussion behind emerging participatory AI research, and the central message of this piece as I try to understand why, despite excellent theoretical and pragmatic progress, it hasn’t made the leap into ethical AI legislative and operational guidance.

A key piece of the puzzle is the dynamic of power and agency implicit in all three possibilities outlined above. Recent scholarship on epistemic justice helps explain this dynamic. Catala refers to epistemic “power-with” as a “kind of epistemic power that lies at the core of epistemic empowerment” (Catala 2025, p. 415), arising from subordinating the pervading and dominant language and centering the perspectives and experiences of another community. Similarly, Mayadhar Sethy calls for “a process of epistemic disobedience refusing the singular authority of Eurocentric rationality” to re-frame modern scientific thinking around AI against the epistemological frameworks of Indigenous knowledge systems (Sethy 2026).

It is understandable that we in power-dominant societies might view these positions as challenging, possibly destabilising, but they can also be seen as an opportunity to discover new possibilities for AI solutions with truly global appeal. When we look back at this watershed period of AI growth, we will reflect on how well power-dominant societies met the moment by shifting the frame of their practice from “power-to” to “power-with”.

References

Anderson, Theresa Dirndorfer, and Ruth Marshall. 2026. “Enabling Diversity and Gender Equity in Human-Centered AI.” In Handbook of Human-Centered Artificial Intelligence, ed. Wei Xu. Singapore: Springer Nature Singapore, 1–59. doi:10.1007/978-981-97-8440-0_104-1.

Barry, Isobel, and Elise Stephenson. 2025. “The Gendered, Epistemic Injustices of Generative AI.” Australian Feminist Studies 40(123): 1–21. doi:10.1080/08164649.2025.2480927.

Bunduchi, Raluca, Alison U Smart, Catalina Crisan-Mitra, and Sarah Cooper. 2023. “Legitimacy and Innovation in Social Enterprises.” International Small Business Journal: Researching Entrepreneurship 41(4): 371–400. doi:10.1177/02662426221102860.

Catala, Amandine. 2025. The Dynamics of Epistemic Injustice: Situating Epistemic Power and Agency. 1st ed. New York, NY: Oxford University Press. doi:10.1093/9780197776353.001.0001.

Corrêa, Nicholas Kluge, Camila Galvão, James William Santos, Carolina Del Pino, Edson Pontes Pinto, Camila Barbosa, Diogo Massmann, et al. 2023. “Worldwide AI Ethics: A Review of 200 Guidelines and Recommendations for AI Governance.” Patterns 4(10): 100857. doi:10.1016/j.patter.2023.100857.

FitzGerald, Alex. 2026. “Anthropic Turns AI Ethics Into a Competitive Differentiator.” Medium. https://medium.com/@alex_54871/anthropic-turns-ai-ethics-into-a-competitive-differentiator-2a10d255a519.

Fricker, Miranda. 2007. Epistemic Injustice: Power and the Ethics of Knowing. 1st ed. Oxford: Oxford University Press. doi:10.1093/acprof:oso/9780198237907.001.0001.

Hudson, Maui, Stephanie Russo Carroll, Jane Anderson, Darrah Blackwater, Felina M. Cordova-Marks, Jewel Cummins, Dominique David-Chavez, et al. 2023. “Indigenous Peoples’ Rights in Data: A Contribution toward Indigenous Research Sovereignty.” Frontiers in Research Metrics and Analytics 8: 1173805. doi:10.3389/frma.2023.1173805.

Kay, Jackie, Atoosa Kasirzadeh, and Shakir Mohamed. 2024. “Epistemic Injustice in Generative AI.” doi:10.48550/ARXIV.2408.11441.

Korzybski, Alfred, and Lance Strate. 2023. Science and Sanity: An Introduction to Non-Aristotelian Systems and General Semantics. Sixth edition. New York, New York, USA: Institute of General Semantics.

Kukutai, Tahu. 2023. “Indigenous Data Sovereignty—A New Take on an Old Theme.” Science 382(6674): eadl4664. doi:10.1126/science.adl4664.

Kukutai, Tahu, and John Taylor. 2016. “Data Sovereignty for Indigenous Peoples: Current Practice and Future Needs.” In Indigenous Data Sovereignty: Toward an Agenda, ANU Press.

Milano, Silvia, and Carina Prunkl. 2025. “Algorithmic Profiling as a Source of Hermeneutical Injustice.” Philosophical Studies 182(1): 185–203. doi:10.1007/s11098-023-02095-2.

Oguamanam, Chidi. 2019. Indigenous Data Sovereignty: Retooling Indigenous Resurgence for Development. Centre for International Governance Innovation. CIGI Papers. https://www.cigionline.org/static/documents/documents/no.234.pdf.

Peters, Dorian, and Tomasz Hollanek. 2025. “The METUX Matrix – A Design Framework for Human-Centred AI.” doi:10.14236/ewic/BCSHCI2025.1.

Russo, Stephanie, and Maui Hudson. 2022. CARE Principles for Indigenous Data Governance. Global Indigenous Data Alliance.

Sethy, Mayadhar. 2026. “Artificial Intelligence and Epistemic Justice: A Decolonial Turn through Indigenous Knowledge Systems.” AI & SOCIETY. doi:10.1007/s00146-026-02936-8.

Tabassi, Elham. 2023. Artificial Intelligence Risk Management Framework (AI RMF 1.0). Gaithersburg, MD: National Institute of Standards and Technology (U.S.). doi:10.6028/NIST.AI.100-1.